Six Pitfalls That Break AI-Enhanced Processes

Challenges, look-fors, and a checklist you can use in class

Listen to this article

Here is an audio recording of me reading the article below. Listen to it on your drive home, on your phone like a podcast, or follow along while you read.

TL;DR

I’m back after a break for Chinese New Year!

Process-based teaching is meant to protect thinking. With AI in the classroom, it can accidentally protect the appearance of thinking instead. But before any process can hold, the essential conditions for learning have to be in place. This article looks at both through a self-determination lens: the load-bearing walls a classroom needs, and the six predictable ways a process still breaks down even when they’re standing.

Intro

If you’ve seen me speak, you’ve heard me say that AI doesn’t create new problems. It reveals old ones.

Take one that’s been hiding in plain sight: we have long valued the static artifact of a finished essay over the messy, cognitive act of actually constructing an argument. When a student can generate something that looks finished in seconds, old habits get exposed. When the essential conditions for real learning aren’t in place, students learn quickly that what matters is to look finished, not to think deeply, or even to know what that looks like. And when a dysfunctional system meets a tool that can produce polish without effort, it shows its hand.

Process-based teaching is meant to protect thinking. In the AI era, it can accidentally protect the appearance of thinking. And here’s the uncomfortable part: most teachers think of themselves as process people. They believe in the work. They value thinking over polish. The problem isn’t bad intentions or misplaced values. It’s the behavioral implementation gap between believing in process and actually designing for it.

That gap is what separates an AI-enhanced educator from a slop-enabling one. Not philosophy. Design.

This article is a list of the six predictable ways process-based learning breaks down with AI, plus the early look-fors that tell you it’s happening.

Before we start, great process design requires the essentials to be in place first. I didn’t invent those essentials. Deci and Ryan’s “Self-Determination Theory” (SDT) names three of them: autonomy, competence, and relatedness. That third one is worth pausing on, because relatedness is the term that tends to trip people up. In SDT, it refers to the felt sense of being connected to the people around you, of mattering to others, and having others matter to you. But in a classroom context, relatedness fails in two distinct ways that are easy to confuse. A student can feel genuinely cared for by their teacher and still have no personal stake in the work. And a task can connect to the real world and still land in a room where a student doesn’t feel seen or cared for by their teacher.

So I’ve split relatedness into two walls, drawing on Zaretta Hammond’s work in Culturally Responsive Teaching and the Brain. The first is belonging: the felt sense that a student is known in the room, not just present in it. The second is relevance, my own interpretation of her ideas around cultural connections to learning: the sense that this particular work has something to do with a student’s own life, community, and experience.

So: Belonging. Competence. Relevance. Autonomy. These are the load-bearing walls of a classroom, as I see it. A student who doesn’t feel seen will feel invisible in the learning. A student who finds the task too difficult will use AI as relief. A student with no personal stake in the work will use AI as the fastest way out of what looks like a checklist. Process design without these walls just creates more elaborate hoops to jump through.

So the conditions come first. But here’s the harder truth: even when they’re strong, a process can still fail. Small design mistakes compound fast when “finished work” is one prompt away. That’s what this article is about. Here’s how processes break.

Six Common Pitfalls of AI-Enhanced Processes

1) Vague language leads to vague behavior

Every breakdown in this pitfall starts with unclear communication. When teachers say “AI allowed” without defining what that means, and when schools frame AI as “just a tool” without examining what that metaphor actually teaches, students are left to interpret expectations that were never made explicit. That’s not an academic honesty problem, though many teachers see it as one. For many students, it’s actually a communication problem.

“AI allowed” without a definition leaves students guessing. Some use AI for idea support. Others outsource the whole task. Mismatched voices, “gotcha” moments, and disputes are the predictable result of thinking expectations that were never stated clearly in the first place.

The same problem lives in the metaphors we use in the classroom. Tool language is genuinely useful early on. It makes AI feel manageable, puts responsibility back on the human, and gives schools a practical framework for early questions: What is this for? Who should use it? When does it help? But tools are neutral. Generative AI is not. It pushes back, persuades, and carries patterns from its training data in ways a hammer never could.

Framing AI as a guest collaborator picks up where tool language stops, and it carries a different set of values with it. A guest has a role, operates within norms, and is welcome but not in charge. But more than that, a guest changes the nature of the work. Learning isn’t transmitted from AI to student. It’s constructed through the interaction: the push and pull of a draft that gets questioned, an argument that gets challenged, an idea that gets stress-tested and comes back stronger. The guest doesn’t write your essay. The guest reacts to your draft, pushes back on your argument, and asks what you actually meant. That’s the kind of co-learning relationship we want to keep cultivating in young people.

There’s a relevance cost to vague language, too. When task language is generic, it signals that any student could have received this assignment. It doesn’t invite students to bring their own experience, community, or perspective into the thinking, and it doesn’t honor or celebrate the families, values, and identities they carry into the room. A student who doesn’t see themselves in the work has no particular reason to do the cognitive heavy lifting when AI can produce something plausible without any of it.

Spot the pitfall early:

You might see “AI allowed” in the task brief, and nothing specific beyond that. Fix it with one concrete allowed/not-allowed example and a short why. Then ask yourself one more question: does the task brief invite students to bring their own experience, community, or identity into the thinking? Generic language and vague AI permissions tend to arrive together, and they send the same message: this work wasn't designed with you in mind. Students need to see what your expectations look like in action, and they need to see themselves in the task.

You might hear students say “I just used it to clean it up,” “you never said we couldn’t,” “just tell me what to do,” or “does it have to be in my own words?” Fix it with precision: name the step in your process where AI enters, and name who owns the decisions. “In this step, use AI to draft two alternative structures. In the next step, the decisions are yours.” And model it first: “Here’s how I used AI this week and what I noticed.” That positions you as a co-learner and shows students what honest reflection on AI use actually sounds like.

You might notice students describing AI's role in completely different ways in the middle of a task that you thought had clear expectations. That's not a dishonesty problem. It's a norming problem: the class never built shared language around what AI's role actually is in this process. Fix it by making that conversation public. Post a one-sentence class agreement on the wall: “In this task, AI is a guest at the drafting stage. The decisions stay with the writer.”

2) Documentation serves policing rather than learning

A process designed to protect academic integrity isn’t automatically a bad thing. But when documentation serves the teacher’s need to prove honesty rather than the student’s need to see their own growth, the purpose gets inverted. Breadcrumbs that could show a learner how far their thinking has traveled become evidence in a case. A folio that could make effort visible and meaningful becomes a paper trail. And students who already weren’t sure they belonged in the room now have confirmation that they’re being watched rather than supported or coached. They’ll invest in not getting caught rather than in the learning.

The better question isn’t “how do we catch them?” It’s “what have we designed that makes outsourcing feel logical?” If the assignment is product-only and thinking never has to show up during the work, detection won’t fix the design problem. A task that hides thinking produces students who hide AI use. That’s a design flaw, not a character flaw. The stronger move is a process so intentional that AI use is built in, expected at a specific moment, and tied to a specific cognitive move. When the teacher defines where AI enters and what the student has to do with it, there’s nothing to hide. There’s also no ambiguity about where the effort needs to be exerted. A rigorous system of thought (I love that phrase) doesn’t leave room for shortcuts because it already accounts for them.

Spot the pitfall early:

You might see process steps treated as proof rather than support, and AI use that is hidden or defensive rather than disclosed. Fix it by making transparency the default: share the prompts you used to build a task, the output you rejected, and the decisions you made. Ask students to follow your example.

You might hear students say “I didn’t know we had to document that,” “the AI just helped me format it,” or go quiet when you ask them to walk you through their process. Fix it by building documentation into the process itself: “Your note should include one place where AI pushed your thinking and one place where you pushed back on AI.”

You might notice more energy going into catching AI use than into redesigning the tasks that produce slop in the first place. Fix it by co-creating norms with students and naming the purpose: “We document AI use so you can see your own thinking grow, not so I can audit it.” That shifts your role from enforcer to mentor.

3) The process becomes a ritual without meaning

A process with named steps isn’t automatically a thinking process. If students can’t explain why a step exists or what cognitive move it requires, they’ll complete it the same way they’d fill out a form: accurately but without meaning, just to get the task done. A student can brainstorm with AI, select an idea, and move on without making a single deliberate cognitive move. The brainstorming happened, but the thinking didn’t.

Remember the walls of the classroom from the opening? When students follow a process without effort, it’s likely a competence issue. A student who can follow steps but can’t name their thinking doesn’t feel capable; they feel procedurally compliant. And a student who can’t see what their effort is actually producing has no reason to invest more of it.

One example I experienced as a student is “reflection.” Many of my teachers had me do it throughout my academic career, and while it was a powerful learning strategy, I wouldn’t say I ever understood why it was important or how to do it well. Today, a student could easily say: “Bah, I’ll just get ChatGPT to whip something up and skip this nuisance of a reflection. It doesn’t offer me new insights.”

Spot the pitfall early:

You might see students complete every step but struggle to explain what thinking they actually did, or why the step mattered. Fix it by adding a “name the thinking” prompt at transitions: “Before you move to the next step, write what you just decided and why.”

You might hear students say “I just did what the step said” or “I wasn’t sure what I was supposed to be thinking about” when you ask them to walk you through their process. Fix it by giving students language they can actually use: “I accepted this because...” or “I pushed back here because...” A shared anchor chart of thinking moves with verbs and brief definitions externalizes the invisible. When students can name what they’re doing cognitively, they’re more likely to do it intentionally. Without that language, thinking stays implicit and the step stays mechanical.

You might notice transition points passing without anyone surfacing what just happened cognitively. Fix it by talking with students about why certain thinking moves matter: “Why do we reflect? How does it help us hold onto what we just experienced?” When students understand the science behind the step, the step stops feeling like a hoop.

4) Documentation as a post-mortem

This pitfall is related to the second one but distinct from it. Pitfall 2 is about why documentation exists: when it’s designed to catch rather than coach, it becomes policing. This pitfall is about when documentation happens: even well-intentioned documentation fails if it arrives too late. A teacher can genuinely want to support student growth and still fall into this trap by collecting everything after the work is done.

This is where autonomy matters, and where formative assessment becomes the practical alternative. Documentation that lives after the work is done belongs to the teacher’s evaluation, not the student’s learning. But documentation designed as connected tasks during the work, each one building on the last, creates something different: a visible record of thinking developing over time. That’s where feedback does its most powerful work. Not as a judgment on a finished product, but as a response to thinking in motion. A teacher who can see a note about a decision from day one, an annotation from day three, and a concept map from day five can give feedback that actually moves the learner forward. Breadcrumbs are invitations for formative response.

Spot the pitfall early:

You might see process artifacts that only exist at submission, with no thinking trail built during the work itself. Fix it by requiring tiny breadcrumbs in the moment: “Annotate one place in your draft where you disagreed with AI and explain what you did instead.”

You might hear students say “I already finished it” when you ask for a decision note, or notice that the only documentation in the room is happening at submission. Fix it by building checkpoints during work time: “At the halfway point, drop a decision note: what have you changed since you started and why?” Breadcrumbs built during the work are invitations for formative response.

You might notice grades that still signal product matters and process is optional, with no weight given to how thinking developed over time. Fix it by including a short “defend your choices” moment: “In your pair share, explain one decision your breadcrumbs show that your final product doesn’t.” Consider delaying the grade until the process is complete and feedback has been acted upon.

5) The process is too rigid or too loose

Under pressure for certainty, processes start to resemble algorithms: fixed sequences meant to produce predictable results. Every student does the same steps in the same order at the same pace. But the opposite failure is just as common. A process with no real structure or access to the teacher leaves students guessing what the teacher wants, and when students are confused, AI becomes the fastest way to resolve the uncertainty. Both failures produce School Slop: student work that is AI-generated rather than AI-enhanced, output that looks complete but reflects no meaningful cognitive effort. One produces slop through compliance, the other through perceived abandonment.

The balance isn’t a middle point between structure and freedom. It’s a design that places cognitive load on the thinking rather than on figuring out what’s expected. A well-designed process gives students clarity about where they are, what they’re doing next, and where they can get support. It doesn’t predetermine where they’ll end up. It scaffolds the journey while leaving room for the thinking to go somewhere real.

This is an autonomy and competence problem. A rigid process communicates that student judgment doesn’t matter. A loose one communicates that the teacher isn’t invested enough to be present. Both erode the walls of the effective classroom. What students need are frequent check-ins, strategic feedback at the right moments, direct teaching, and a clear understanding of how AI supports the class's goals without replacing the cognitive work that builds capability. It’s so much more than content.

There’s also a longer-term goal on the horizon worth designing for. The goal was never compliance with a process. It was students internalizing the thinking moves behind it, adapting them to new contexts, and eventually designing their own. A student who has genuinely absorbed a process doesn’t need the scaffold anymore because the scaffold has become instinct. That’s also where wise AI use lives: not in following rules about what AI can and can’t do, but in a student who knows their own thinking well enough to know when AI is enhancing it and when it’s replacing it.

Spot the pitfall early:

You might see every student moving through the same steps at the same pace, regardless of where their thinking actually is, with no room for a different interpretation to land. Fix it by designing for clarity about expectations, not predictability of outcomes. Students should know what’s expected at each step, but the process should leave genuine room for their thinking to go somewhere unexpected. Turn steps into decision points: “If your draft is coherent, move to revision. If it’s messy, use AI to propose two structures, then justify which one you chose and why.”

You might hear students say “I don’t know what you want” or “can I just use AI to figure out the next step?” Fix it by being present during the work and building in frequent check-ins: “At each checkpoint, I’ll spend two minutes with each group. Come ready to tell me where you’re stuck.” Strategic feedback at the right moment matters more than a perfectly sequenced process. Make it explicit how AI supports the class’s goals: students should be able to answer what AI is helping them do and what thinking stays theirs.

You might notice students who can follow the process but can’t explain why any step matters, or students who turn to AI the moment the structure gets ambiguous. Fix it by planning for fading explicitly: “By week four, students design their own process for this task type using the thinking moves we’ve practiced together.” We want students to internalize the thinking moves well enough that the scaffold becomes a natural part of how they operate.

6) How we assess learning

This is where every other pitfall converges. Undefined AI use produces polished work with no thinking behind it. Policing replaces the coaching that would have caught it early and kept thinking with the students. Silent steps mean students completed the process without understanding it. Post-mortem documentation gets retrofitted to match the product. Rigid and loose processes push students through without checking whether understanding is being built.

And now, at the end, if the only thing being evaluated is the final product, every one of those design failures gets rewarded and reinforced with a grade. A rubric that can't tell the difference between a thinking student and a polished AI draft isn't evaluating learning. It's evaluating presentation. This is a relevance problem: when the grade doesn't value thinking, the assessment tells students what actually mattered, and it wasn't their ideas.

Thinking = effort.

When thinking doesn’t show up in the grade, students get a clear signal: the cognitive work doesn’t count. And a student who has figured out that thinking doesn’t count will find the fastest path to a product that looks like it does.

The fix is a holistic evaluation: criteria that only a thinking student can meet. Justified decisions. Evidence of revision. Original connections that held up in conversation. A student who can explain their choices in a two-minute conference is demonstrating something a polished AI draft never could.

Spot the pitfall early:

You might see AI-first drafts becoming final drafts because revision is optional, and process evidence that gets collected but carries no weight in the grade. Fix it by grading process and product, even lightly, so thinking has perceived value: “Twenty percent of this grade is your decision trail: where you changed direction and why.” Make revision count explicitly. A revised draft with a rationale earns more than a polished first attempt.

You might hear students say “I don’t remember why I changed that” or “the AI suggested it and it seemed better” when you ask them to explain a decision in their own work. Fix it by building a process journal from the start so thinking is captured as it develops, not reconstructed after the fact. Example assignment prompt: “Alongside your final product, submit a journal that shows where your thinking started, where it changed, and where you landed.” Example conference prompt: “Open your journal. Walk me through one moment where you changed your mind.”

You might notice a rubric that rewards polish and completeness more than decisions and reasoning, where a polished AI draft scores the same as a well-thought-out one. Fix it by adding criteria only thinking can meet: “Your rubric includes one criterion your final product alone can’t satisfy: explain one original connection you made that AI didn’t give you.”

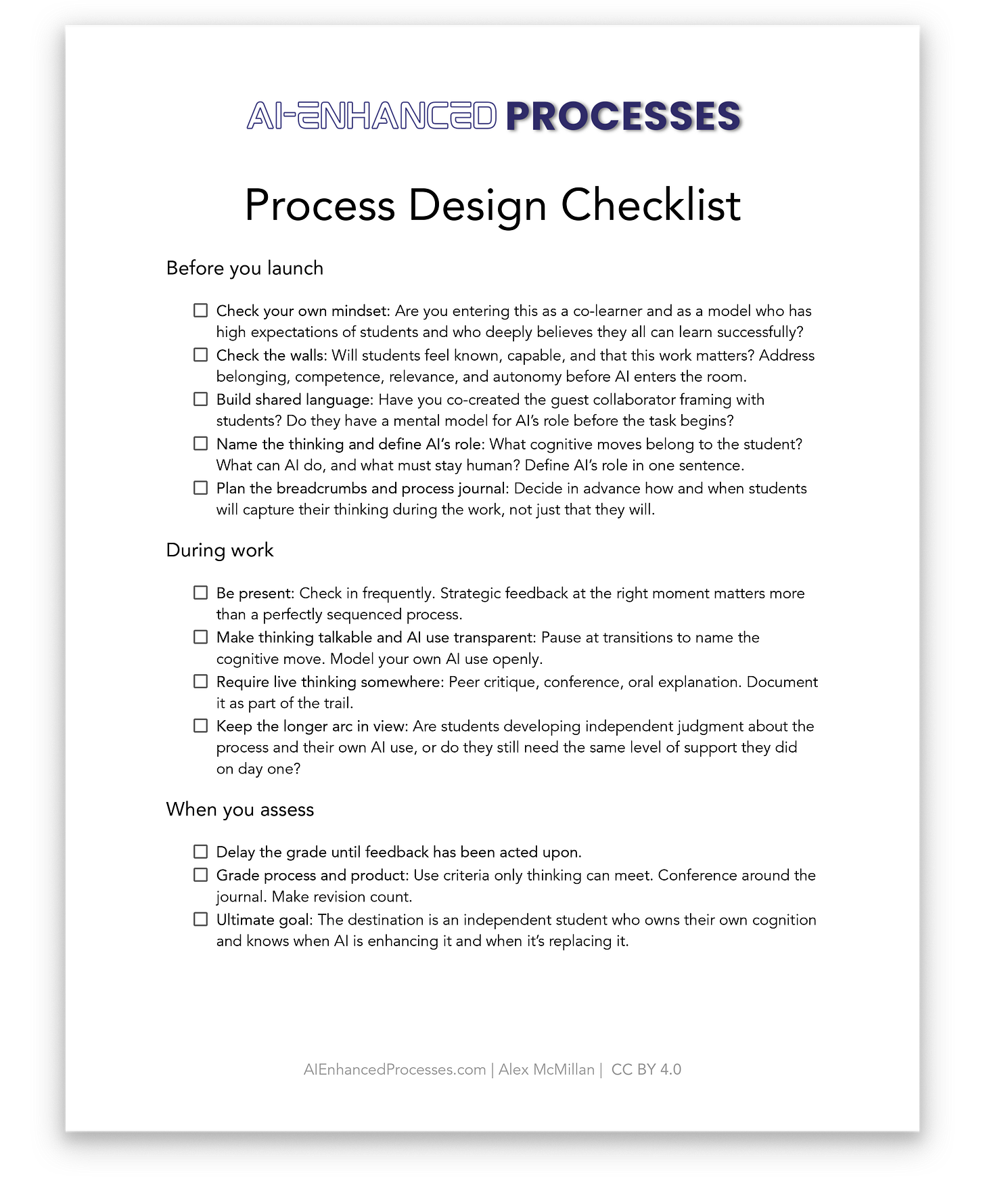

Monday-Ready Design Checklist for Process-Based Learning with AI

Each pitfall above comes with a concrete fix. If the look-fors resonated, here’s a free downloadable checklist PDF that pulls all six into one diagnostic you can use before your next learning task with AI in the room. Print it, post it, share with a colleague, bring it to a planning meeting with your team, or keep it on your desk.

Five Readings Worth Your Time

Self-Determination Theory, by Edward Deci and Richard Ryan, is the research backbone behind the load-bearing walls I describe in this article. What I appreciate about their work is how cleanly it names the psychological conditions for human motivation and growth: autonomy, competence, and relatedness. It’s a simple framework that holds up in classrooms every day.

“Tomorrow is Today: The classroom I’m afraid of”, by Alex Kotran, is one of the clearest pieces I’ve read on AI in education. He warns that AI doesn’t automatically create equity for all; in fact, it can entrench inequity if we keep defaulting to a “pedagogy of poverty” and skip serious teacher training.

10 to 25, by David Yeager, connects nicely to the Carol Dweck mindset work I’ve mentioned in other posts. Yeager is a contemporary and collaborator of Dweck’s, and he makes a great point about what young people are scanning for constantly: belonging and respect.

The Paradox of Choice: Why More Is Less, by Barry Schwartz. This book connects directly to the autonomy wall and pitfall 5. Schwartz makes a counterintuitive point that shows up in classrooms every day: more options do not automatically create more freedom. Too many choices can overload students, increase second-guessing, and push them toward avoidance or shortcuts.

Culturally Responsive Teaching and the Brain, by Zaretta Hammond, reframes relevance as a neurological condition, not a motivational one. Hammond’s argument is that the brain won’t take on the cognitive risk of hard thinking unless it first feels safe and seen. If students don’t sense that a task connects to something real in their lives, the brain treats it as low-stakes noise and looks for the path of least resistance. That path, right now, is AI.

AI Disclosure

This article took 9-10 hours to write. There was a lot of effort put into it in the form of brainstorming, researching, revising, word-smithing, editing, and creating visuals.

The ideas are mine, mostly drawn from my book and previous articles as well as the literature that I listed in the reading section above.

I used Claude Opus and Sonnet 4.6 and Gemini 3 Pro with Deep Research to test my thinking against the broader literature, then worked section by section with AI as a collaborator. I found Claude Sonnet 4.6 did the best job with understanding the intentions and giving me feedback on how to adjust and make it digestible for readers. Then I used ChatGPT 5.2 Thinking to check that it still sounded like me. ChatGPT was sort of my top-level editor that I ran everything by to make sure it all made sense and the thread running through the article was clear.

Thank you for reading my article! If you liked it, please share it with someone who you think would enjoy its content.

See Me Speak

This academic year, I have a few more public speaking engagements lined up where I will be speaking about the above ideas and more. I hope to see you in person and talk more about AI-enhanced processes!

21 CL, Hong Kong - Breakout sessions

AIFE, Yokohama - MC and breakout sessions

AI in Action, Beijing - Keynote and breakout sessions