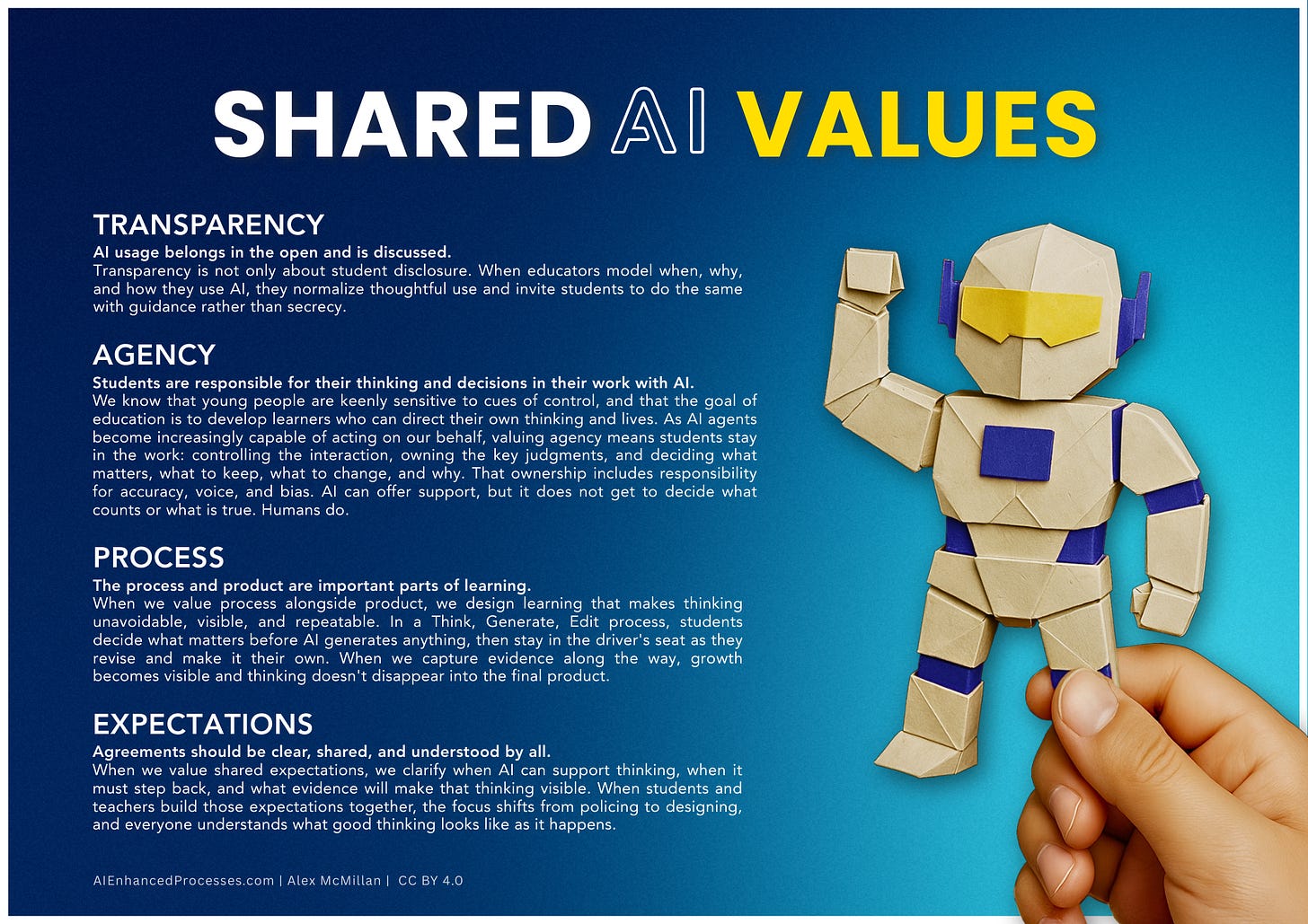

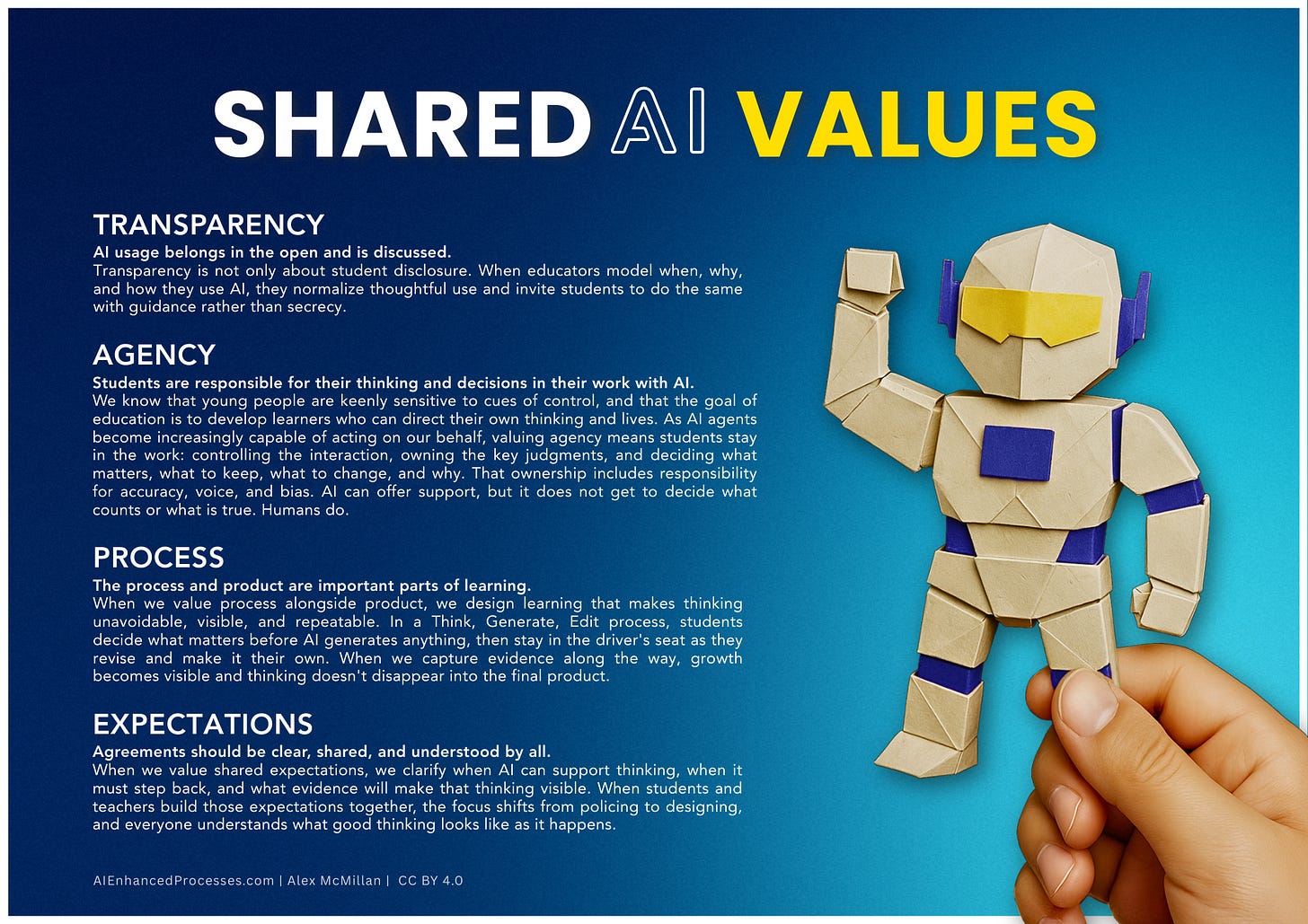

Shared AI Values

Core values to guide our use of AI to enhance our learning.

TL;DR

Here’s a free poster you can use with students to build language around your shared AI values.

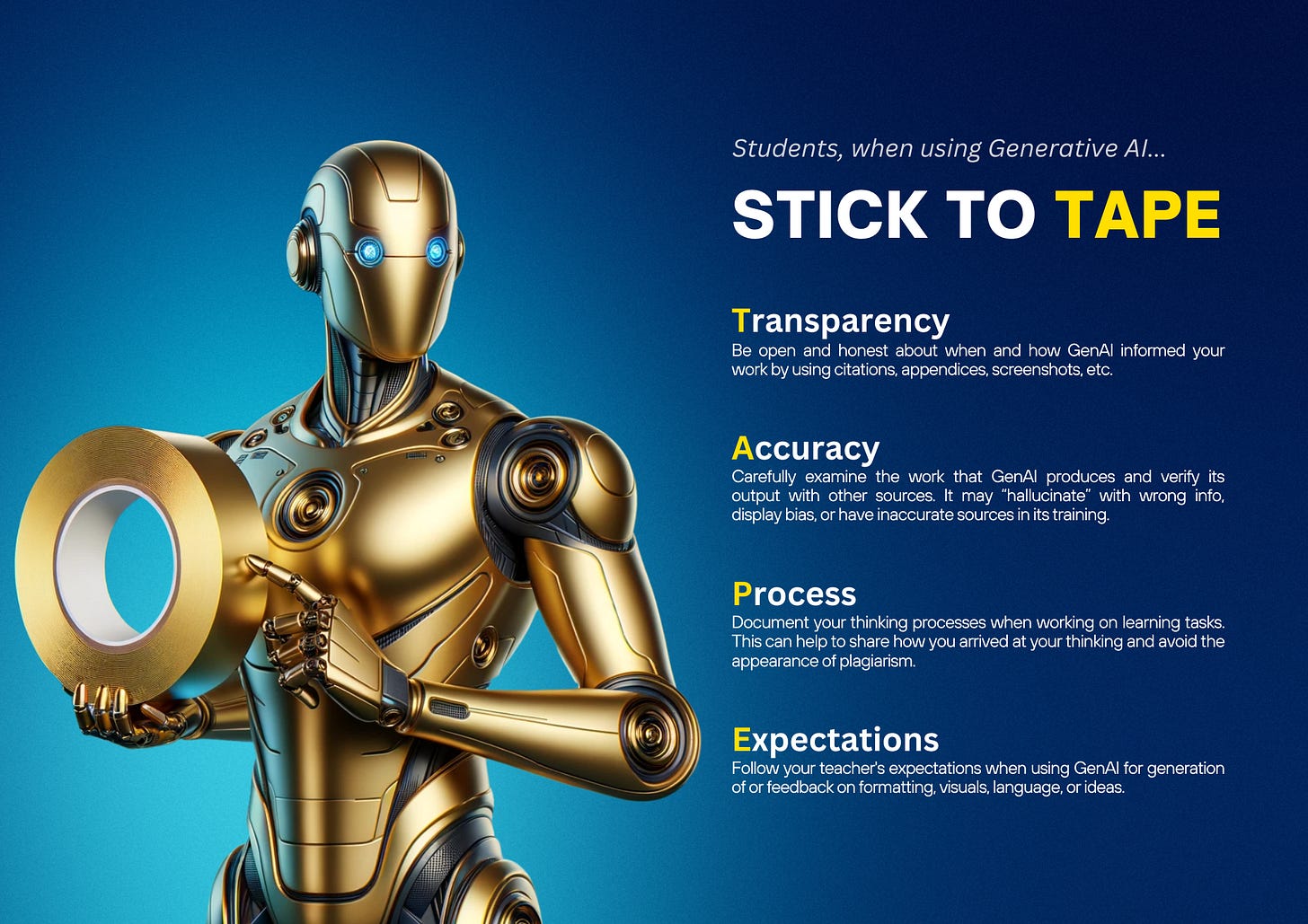

TAPE, Two Years Later

In 2023, I stood in a room in Hong Kong at Apple’s Future of Learning HK conference and scribbled down four words that would eventually shape everything I built after it.

Holly Clark was facilitating. The conversation was electric. And somewhere in that room, TAPE was born: Transparency, Accuracy, Processes, Expectations. A set of values I hoped teachers would hang on their walls or drop into a presentation slide so students would have something concrete to hold onto when they reached for an AI tool.

The original framing was student-facing. Students, when using Generative AI... STICK TO TAPE. It was direct, practical, and honestly, a little compliance-flavored. Not wrong, just early. A lot has changed since then. I wrote a book. I spent two years in classrooms and conference halls across Asia testing these ideas with real teachers and real students. And somewhere during those years, TAPE grew up.

“Transparency”

The original graphic asked students to be transparent about when and how they used AI. That still matters. But I missed something important: teachers are users too.

When educators use AI in their planning, their feedback, and their communication, and say nothing about it, they’re modeling secrecy. Students are watching how adults navigate these tools, and if the adults are quiet about it, the implicit message is that AI use is something to hide or manage rather than discuss openly.

Transparency now runs in both directions. It’s a professional practice, not just a student disclosure policy. When you model your own AI use openly, you normalize thoughtful use and invite students into the same kind of reflection. You become a co-learner rather than an enforcer.

“Accuracy” to “Agency”

This is the change I want to spend the most time on because it wasn’t obvious to me at first. Accuracy was always present in the information literacy chapter of my book. Can students verify what AI produces? Is it factually sound? Those questions are still real and still worth asking.

But accuracy is narrow. It asks about the output. It doesn’t ask about the learner.

After two years of developing my writing around AI-enhanced processes, I kept returning to a deeper question that accuracy alone couldn’t hold: who is doing the thinking? That question runs through everything I’ve built. A well-designed learning experience that leverages Think, Generate, Edit has an answer to it at every stage. The breadcrumbs of thinking that students leave behind are evidence of it.

The word I needed wasn’t accuracy. It was agency, because agency asks whether the student is still in control. It holds information literacy inside it, because you can’t exercise real agency over a claim you haven’t verified. But it goes further, into ownership, judgment, decision-making, and what it actually means to learn something rather than generate something.

We’re entering a phase where AI agents don’t just suggest ideas from a chat window but act on our behalf, completing tasks, making decisions, and taking initiative in ways that used to require a human in the loop. That changes what students need from us, because the question is no longer just whether they used AI, but whether they stayed in the work at all. Agency is no longer just a classroom value for learning, as it long has been. It’s the skill of staying the author of your own thinking when the tools around you are increasingly capable of thinking for you.

In another article, I suggested that we think of AI as a guest collaborator. A synthetic guest in our classrooms who can do remarkable things. But the student is the host. The host sets the agenda. The host makes the calls. Agency is what makes that true. You’d be surprised at how often students think they have to comply with AI rather than work with AI.

A great book that explores why AI’s agency makes it less of a tool and more of an agent is Nexus by Yuval Noah Harari.

“Process”

Of all the letters in TAPE, P was always the one I felt most strongly about and the one I felt the original graphic undersold.

Process is not a checklist. It’s not a workflow someone hands you. A genuine AI-enhanced process names the kinds of thinking students are expected to do, structures the moments when AI can support that thinking, and captures evidence that real thinking happened along the way. Over time, those structures fade as students internalize the moves and begin to work more independently. That’s the goal. Internalization. Automaticity. Students who don’t need the scaffold anymore because they’ve built the capacity.

Without an intentional process, there is a lot that can go wrong, like AI doing the thinking for kids, not with them. That’s school slop, and it’s what I’ve spent the last few years helping teachers design against.

The documentation “breadcrumbs” concept lives here. Breadcrumbs are lightweight traces of thinking (a quick voice memo, an annotated draft, a before-and-after comparison) that make the process visible without turning documentation into a second assignment. If there are no breadcrumbs, there’s no way to know whether the student was thinking or whether the AI was doing it for them. And more importantly, there’s no way for the student to know either.

“Expectations”

Expectations were always the structural piece of the earlier graphic, and that hasn’t changed. But the language around them has tightened. Expectations are not rules handed down after a policy meeting, and I felt that the earlier version of the poster might have had that ethos to a certain degree. Expectations in this case are shared agreements, built through clear language, that name the thinking moves expected at each stage of the work. They clarify when AI can support those moves and when it must step back. They specify what evidence will make student thinking visible.

My friend and colleague Nick Soentgerath pushed this idea further in a way that stuck with me: when students and teachers evaluate together what skills and knowledge a task is actually trying to develop, they can make informed decisions together about where AI supports that goal and where it gets in the way. That co-creation has a huge impact on learning, motivation, and the students’ mindsets. Students are far more likely to honor expectations they had a hand in shaping than rules that arrived from above. Shared agreements, built with students rather than handed to them, are the difference between a policy and a practice.

Clear expectations protect agency by design, not by policing after the fact. If a teacher has to chase down evidence of student thinking after an assignment is submitted, the expectations weren’t clear enough upfront. The design failed before the student did.

So here’s where I landed.

The new graphic is called Shared AI Values. I decided to step away from putting the acronym front and center. There has been an explosion of AI framework acronyms, to the point that it became too much.

In this case, I wanted to think of it as community agreements. The “we” matters because it puts teachers and students on the same side of the same question: how do we use these tools in ways that keep humans, and human thinking, at the center?

TAPE started as a spark in a room in Hong Kong. Years later, it’s a series of values I’ve tested in classrooms across Asia, built into a book, and kept refining every time a teacher asked me a question I couldn’t fully answer yet.

Honestly, the values haven’t changed, but my understanding of them has, and as this technology continues to evolve, so too can the application of our long-held values.

Download the new graphic below. Share it. Adapt it for your context. And if you use it, I’d love to know what conversations it starts.

Monday-Ready Ways to Use This Poster

Student discussion prompt:

“Look at the poster. Pick one value you think is hardest for you during assignments. What would it look like in your work today? Give one concrete example: ‘If I’m protecting Agency, I will ___ instead of ___.’”

Teacher modeling move:

“I’m going to show you what transparent AI use looks like on a real task.”

I’ll project a tiny example:

My prompt: “Give me 5 possible headlines for this paragraph.”

What I used: headline #3 (with edits)

What stayed human: the claim + the final choice

Then: “Notice what I’m doing: I’m not hiding AI. I’m also not letting it make the decisions that matter.”

Breadcrumb example:

Value Tag Breadcrumb (students add one sentence at two points)

Midway checkpoint: “Right now I’m using Process by ___.”

End checkpoint: “The biggest decision I made (Agency) was ___. I can point to where it shows in my work: ___.”

AI Disclosures

One of the most repeatable practices you can bring into your classroom tomorrow is the use of AI disclosure statements. You’ve probably seen them at the bottom of articles. Mine tend to run long (occupational hazard). But a student’s disclosure can be three sentences. The word count isn’t what I want to emphasize; what matters is that it’s honest, and that writing it requires them to actually think about what they did.

A disclosure is a breadcrumb. It makes the process visible. And a well-written one touches all four values.

Transparency is the obvious one. The disclosure gets interesting when students have to answer: where did AI show up?

For Agency, what did you keep, cut, push back on? Why? If a student can’t answer that, it’s useful data for both of you.

Process shows up when students describe their thinking moves. “I used ChatGPT to help write this” tells you nothing. “I outlined my argument first, then rewrote the conclusion myself” tells you everything.

And Expectations close the loop. Did you follow the agreements your class built together? A disclosure is a natural place for students to name that, without you having to police it.

When students write disclosures regularly, they start thinking about their process while it’s happening. That’s the practice we’re after.


A great practical way to introduce AI routines in the classroom. Great examples too. I think all components should appear at the Process stage. Process encompasses all the decisions and intentions before, during, and after using AI: What kind of agency did you (the student) take during your conversation with AI? What expectations did you maintain or change (did you have an aha moment that altered your main thesis)? Were you transparent if that actually happened, and did you acknowledge the influence of AI?